Claude Code’s Source Leak: What Happened, Why It Matters, and What It Reveals About AI Engineering

Gulger Mallik

Software Engineer & AI Researcher

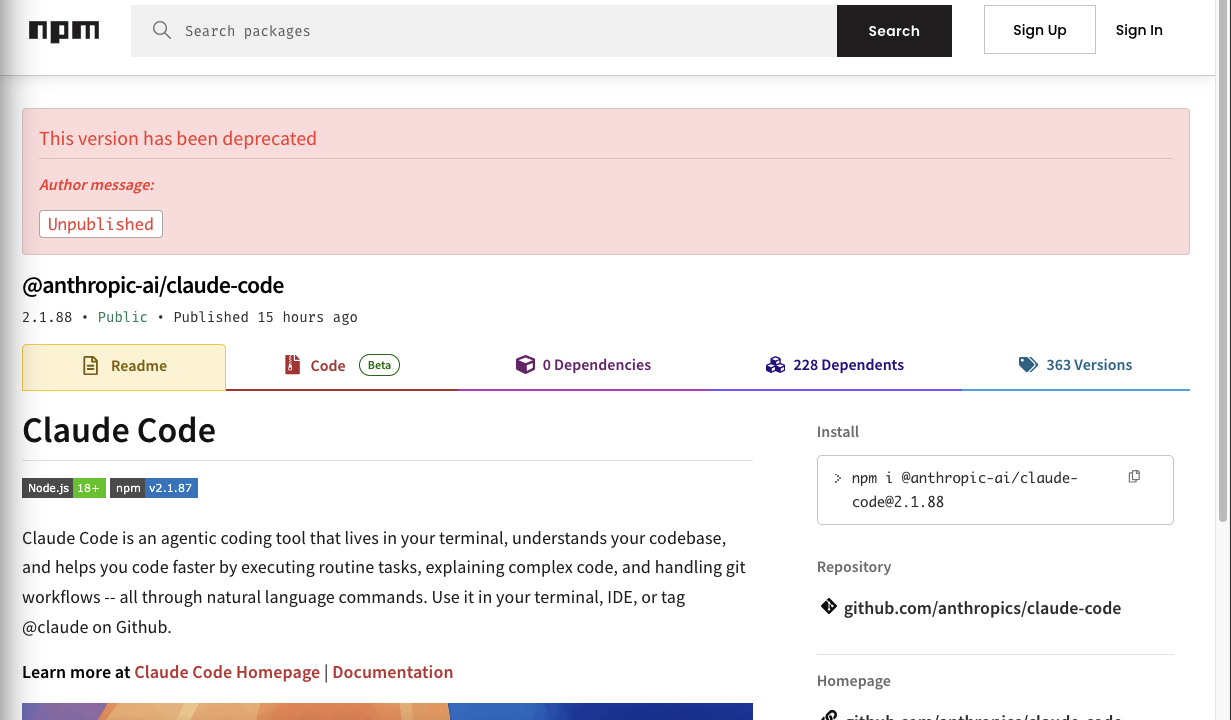

Anthropic accidentally exposed the full source code of its Claude Code CLI through a build-pipeline configuration error, revealing internal logic and unreleased projects.

The Anatomy of an Accidental Disclosure

In a significant lapse of operational security, Anthropic recently leaked the entire unobfuscated TypeScript source code of its Claude Code command-line tool. The incident, caused by a misconfigured npm release, exposed more than 1,900 files comprising over 512,000 lines of proprietary code. The package included a source map file that was never intended for public distribution. Because a source map links bundled JavaScript back to the original TypeScript files and can embed their full contents, the company effectively published a roadmap of its internal engineering philosophy, debugging systems, and LLM orchestration strategies.
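To see why a single source map can expose an entire codebase, it helps to look at the format. A Source Map v3 file is JSON: its `sources` array lists the original files, and an optional `sourcesContent` array, parallel to `sources`, can carry each file's full text. The minimal Python sketch that follows audits a map for embedded sources. The function name `embedded_sources` and the sample map are hypothetical illustrations and do not reflect the actual file in the leaked package.

```python
import json


def embedded_sources(source_map_text: str) -> dict[str, int]:
    """Map each source path to its line count, for every source whose full
    text is embedded in a Source Map v3 document via `sourcesContent`."""
    data = json.loads(source_map_text)
    sources = data.get("sources", [])
    # `sourcesContent` is optional; when present it is parallel to `sources`,
    # and a null (None) entry means that file's text is not embedded.
    contents = data.get("sourcesContent") or []
    report: dict[str, int] = {}
    for path, text in zip(sources, contents):
        if text is not None:
            report[path] = len(text.splitlines())
    return report


# Hypothetical, hand-written source map used purely for illustration.
example_map = json.dumps({
    "version": 3,
    "file": "bundle.js",
    "sources": ["src/main.ts", "src/tool.ts"],
    "sourcesContent": [
        "export function main() {\n  run();\n}\n",
        None,
    ],
    "names": [],
    "mappings": "",
})

for path, lines in embedded_sources(example_map).items():
    print(f"{path}: {lines} lines of original source embedded")
# prints: src/main.ts: 3 lines of original source embedded
```

A check like this flags any map that carries original source, but the safer default is simply not to ship the map in a public package at all.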

The code spread rapidly across GitHub, where thousands of developers forked repositories mirroring it before Anthropic could intervene. Although the company quickly pulled the affected package, the damage to its intellectual property was immediate. Anthropic has since confirmed that the leak was caused by human error during the packaging process, and stated explicitly that no customer data, private API keys, or proprietary model weights were exposed.

Unveiling the Roadmap: Future Features and Internal Experiments

Perhaps the most intriguing aspect of the leak was the window it provided into Anthropic’s R&D pipeline. The code contained references to several unreleased features and internal experiments that suggest a much more ambitious vision for Claude Code than what has been publicly demonstrated. Key findings included:

- Kairos: A persistent background agent architecture designed to handle long-running tasks beyond the immediate interaction window.

- AutoDream: A sophisticated memory-consolidation system aimed at providing Claude with better context retention across extended coding sessions.

- Undercover Mode: A stealth-oriented execution layer, hinting at capabilities for background analysis or silent operation.

- Buddy: A playful, ASCII-art-based assistant character, suggesting the human-centric design approach the team is exploring for user engagement.

Lessons for the AI Industry

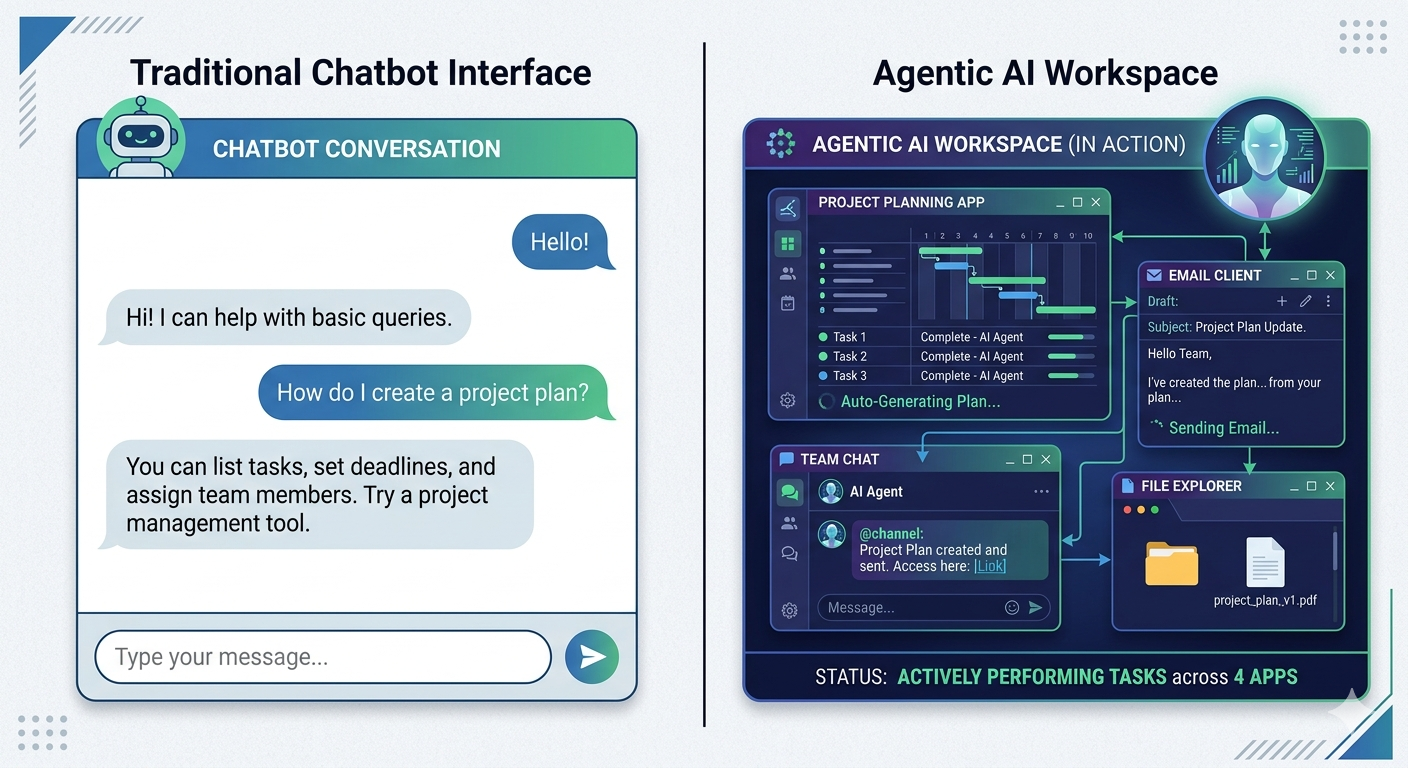

This incident is a stark reminder of how fragile software supply-chain security can be, particularly for AI-native companies. For competitors and security researchers, the leak is a goldmine: it offers a rare, granular look at how Anthropic structures its tool-use loops, manages safety filters, and orchestrates LLM calls in a CLI environment. While the industry often debates the merits of open-source versus closed-source AI, this leak has forced a degree of transparency that the company was not yet ready to offer.

For the broader developer community, the incident highlights the importance of build-pipeline hardening. As AI tools grow more complex, the separation between development artifacts and production releases is not just a best practice but a security boundary: debug aids such as source maps belong in internal builds, and what gets published should be defined by an explicit allowlist (for example, the `files` field in package.json) and inspected before every release, for instance with `npm pack --dry-run`. Anthropic’s experience shows that even the most sophisticated AI companies are vulnerable to the simplest of administrative oversights.
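One way to turn that boundary into an automated check is a pre-publish gate that inspects the tarball contents before release. The Python sketch that follows is a minimal illustration, not Anthropic's process. It assumes the JSON shape printed by `npm pack --dry-run --json` in recent npm versions (an array of package objects, each with a `files` list of `{"path": ...}` entries); the helper names `packed_paths` and `forbidden_files` and the `FORBIDDEN_PATTERNS` deny-list are hypothetical.

```python
import fnmatch
import json

# Hypothetical deny-list: file patterns that should never ship in a public package.
FORBIDDEN_PATTERNS = ("*.map", "*.env", "*.pem")


def packed_paths(npm_pack_json: str) -> list[str]:
    """Collect file paths from `npm pack --dry-run --json` output.

    Assumption: the output is a JSON array of package objects, each with a
    `files` list of {"path": ...} entries, as printed by recent npm versions.
    """
    packages = json.loads(npm_pack_json)
    return [entry["path"] for pkg in packages for entry in pkg.get("files", [])]


def forbidden_files(paths: list[str], patterns=FORBIDDEN_PATTERNS) -> list[str]:
    """Return every path matching at least one deny-list pattern.

    fnmatchcase's `*` also matches "/", so "*.map" catches "dist/cli.js.map".
    """
    return [p for p in paths if any(fnmatch.fnmatchcase(p, pat) for pat in patterns)]


# Hypothetical sample standing in for real `npm pack --dry-run --json` output.
sample = json.dumps([{
    "name": "example-cli",
    "files": [
        {"path": "package.json"},
        {"path": "dist/cli.js"},
        {"path": "dist/cli.js.map"},
    ],
}])

violations = forbidden_files(packed_paths(sample))
for path in violations:
    print(f"refusing to publish: {path}")
# prints: refusing to publish: dist/cli.js.map
```

In a CI job, the same two functions would read the real `npm pack --dry-run --json` output and fail the release whenever `violations` is non-empty. The `files` allowlist in package.json remains the primary control; a deny-list gate like this is a second, independent check, so a stray source map would have to slip past both.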

The Future of AI Transparency

As the dust settles, the conversation has shifted toward the ethics of handling leaked proprietary code. Many in the community have refrained from using the leaked logic, citing concerns over intellectual property and the potential for legal repercussions. However, the event has reignited the debate about whether companies like Anthropic should adopt a more open approach to their tooling, given that the underlying mechanics are often discoverable through reverse engineering or accidental leaks.

Ultimately, the Claude Code leak is a humbling moment for Anthropic. It gives the company an opportunity to strengthen its internal security audits and revisit its release protocols. As Anthropic continues to push the boundaries of agentic coding assistants, the focus is likely to shift toward ensuring that its technological edge is protected not by intent alone but by rigorous, automated verification of its deployment pipelines.
