Unlocking Gemma 4 and Beyond

Gulger Mallik

Software Engineer & AI Researcher

Discover Google’s AI Edge Gallery, an on-device sandbox that brings powerful generative AI, including Gemma 4, to your mobile device with total privacy.

The Future of Mobile AI is Local

For years, generative AI has been synonymous with cloud-based processing. Sending sensitive data to remote server farms was the standard, but that paradigm is shifting. Google’s AI Edge Gallery is an on-device sandbox that lets users run sophisticated open-source models directly on their smartphones. Because the computation stays local, your prompts, images, and audio are processed on the device instead of being uploaded to a server, which gives you a strong privacy and security baseline.

Unlocking Gemma 4 and Beyond

The highlight of the current AI Edge Gallery release is the inclusion of Gemma 4, a lightweight model optimized to run efficiently on mobile hardware without giving up its reasoning capabilities. The gallery serves as a playground where developers and enthusiasts can experiment with:

- Multimodal image analysis for real-time visual understanding.

- On-device audio transcription for fast, offline speech-to-text.

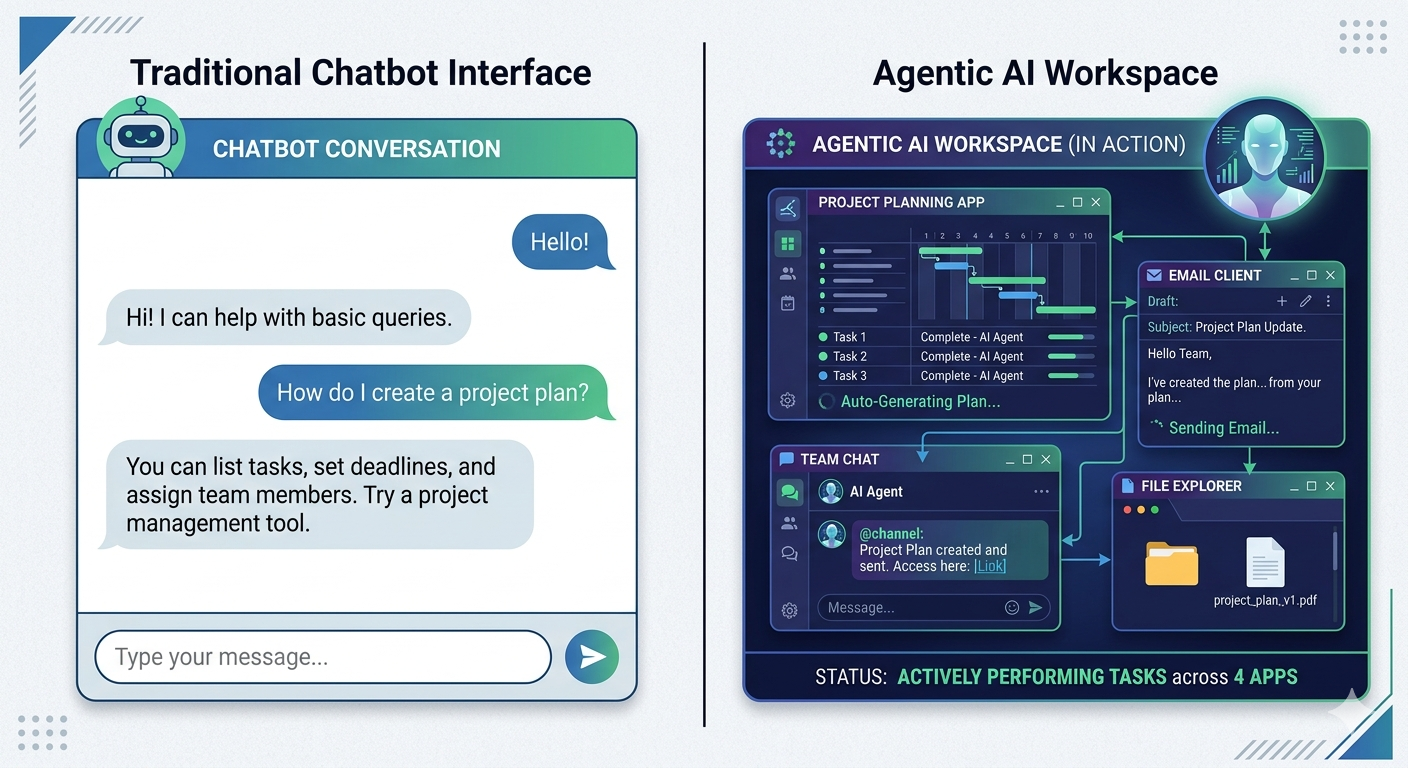

- Agent-style skills that can perform local device actions.

- Rapid prompt experimentation without latency.

Built for Developers and Enthusiasts

Google has designed this sandbox with accessibility in mind. Whether you are a seasoned machine learning engineer or an AI hobbyist, the platform offers a streamlined environment. It provides robust model management, benchmarking tools to measure performance, and support for loading custom models. By leveraging the LiteRT runtime, the gallery ensures that these complex neural networks operate smoothly across a range of hardware configurations.
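
To make the idea of benchmarking concrete, here is a minimal sketch of the kind of latency measurement such a tool performs. It is not the gallery's own benchmarking code and does not call LiteRT: `fake_model` is a hypothetical stand-in for an on-device model call, and `benchmark` is an illustrative helper name. In practice you would swap in whatever function actually runs your model.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def benchmark(run_inference: Callable[[str], str], prompt: str,
              warmup: int = 2, runs: int = 10) -> Dict[str, float]:
    """Time repeated calls to run_inference; report latency in milliseconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")

    # Warm-up calls are discarded so one-time costs (loading, cache fills)
    # do not skew the measured runs.
    for _ in range(warmup):
        run_inference(prompt)

    latencies_ms: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        run_inference(prompt)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)

    latencies_ms.sort()
    # Nearest-rank 90th percentile over the sorted samples.
    p90_index = max(0, math.ceil(0.9 * len(latencies_ms)) - 1)
    return {
        "mean_ms": statistics.fmean(latencies_ms),
        "median_ms": statistics.median(latencies_ms),
        "p90_ms": latencies_ms[p90_index],
        "min_ms": latencies_ms[0],
        "max_ms": latencies_ms[-1],
    }


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an on-device model call (not a real API)."""
    time.sleep(0.002)  # simulate a small amount of inference work
    return prompt.upper()


if __name__ == "__main__":
    stats = benchmark(fake_model, "Describe this image in one sentence.")
    for name, value in stats.items():
        print(f"{name}: {value:.2f}")
```

Reporting the median and a high percentile alongside the mean matters on phones, where thermal throttling and background activity can make individual runs much slower than the typical case.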

Getting Started

The barrier to entry is lower than ever. The AI Edge Gallery is compatible with Android 12+ and iOS 17+, making it accessible to a wide array of modern mobile users. Installation is straightforward, allowing you to go from setup to inference in minutes. Because the gallery is part of Google’s broader AI Edge ecosystem, developers can prototype locally and later deploy their models into production-grade applications with confidence.

As we move toward a future where AI becomes a daily utility, the shift toward local processing is inevitable. Google’s AI Edge Gallery isn't just a tool; it is a preview of a world where your phone is smart enough to handle the heavy lifting, all while respecting the sanctity of your private information.
